The most important change to the bid stream this month is not curation. It is filtering.

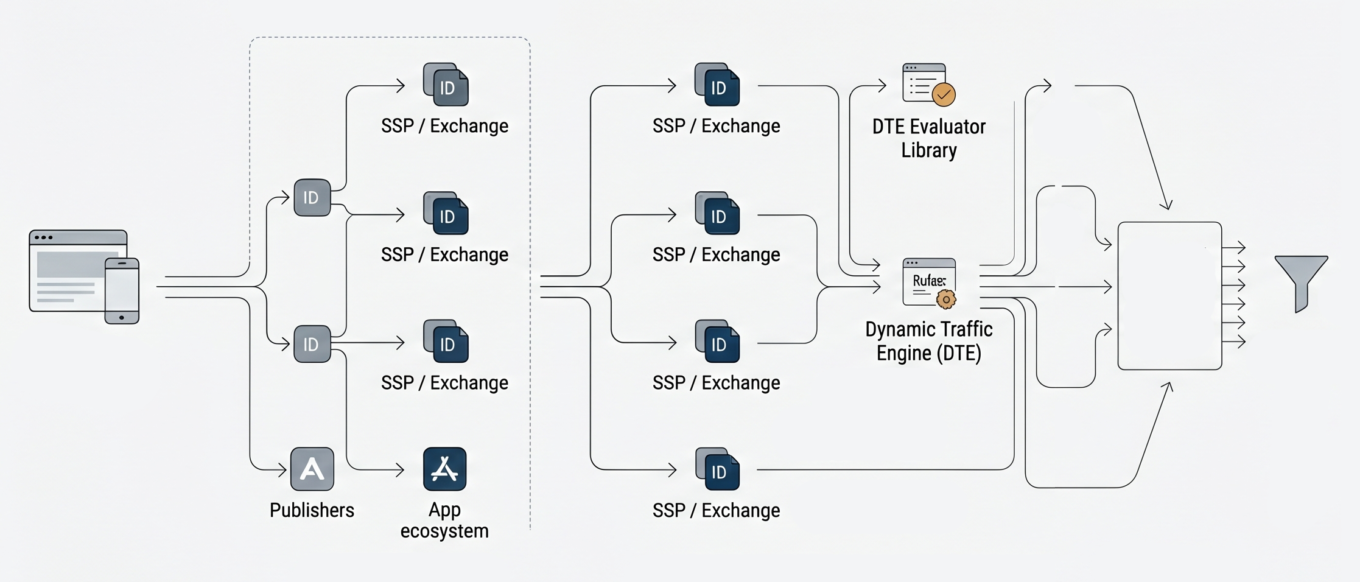

On April 15, the IAB Tech Lab announced that Amazon Ads is donating its Dynamic Traffic Engine, or DTE, as open-source code. DTE is a pre-bid filtering layer. It lets a DSP publish its preferences (ad formats, positions, regions, refresh rates, time-of-day weights, deal ID exclusions) to a cloud-hosted rules engine. SSPs read those rules through an evaluator library and shape their traffic accordingly, forwarding or filtering each impression before it ever reaches the bidder.

The mechanics are simple. The DSP signals what it wants. The SSP listens and shapes the bid stream accordingly. Infrastructure gets streamlined. Listening costs drop. Bid density on the impressions that matter goes up. The traffic that would have been thrown away on the DSP’s side never leaves the SSP’s side in the first place.
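
A minimal sketch of that handshake may help make it concrete. Everything in it is an assumption for illustration: the `Preferences` fields, the `should_forward` function, and the impression dictionaries are hypothetical names, not the DTE schema or its evaluator library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preferences:
    """Hypothetical rule set a DSP might publish (not the DTE schema)."""
    formats: frozenset
    regions: frozenset
    excluded_deal_ids: frozenset = frozenset()


def should_forward(prefs: Preferences, impression: dict) -> bool:
    """Hypothetical SSP-side check: forward only traffic the DSP asked for."""
    if impression["format"] not in prefs.formats:
        return False
    if impression["region"] not in prefs.regions:
        return False
    # Impressions without a deal ID pass this check.
    if impression.get("deal_id") in prefs.excluded_deal_ids:
        return False
    return True


# The DSP signals what it wants.
prefs = Preferences(
    formats=frozenset({"video", "banner"}),
    regions=frozenset({"US", "CA"}),
    excluded_deal_ids=frozenset({"deal-123"}),
)

# The SSP evaluates each impression before it leaves the SSP's side.
impressions = [
    {"id": "a", "format": "video", "region": "US"},
    {"id": "b", "format": "audio", "region": "US"},
    {"id": "c", "format": "banner", "region": "CA", "deal_id": "deal-123"},
    {"id": "d", "format": "banner", "region": "CA", "deal_id": "deal-456"},
]

forwarded = [imp["id"] for imp in impressions if should_forward(prefs, imp)]
print(forwarded)  # ['a', 'd']
```

Impressions `b` and `c` never reach the bidder: one is the wrong format, the other carries an excluded deal ID.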

I have been waiting for something like this for almost a decade. The reason why says more about where the industry is heading than about Amazon.

We Solved This Once

In 2015, I was one of the early adopters of nToggle. Header bidding had broken the economics of the bid stream. A single impression was being multiplied across exchanges at a rate the buy side was never built to absorb, and the duplication was not accidental. It was the incentive structure. Publishers earned more yield by listing the same impression with more SSPs. SSPs earned more by passing more requests downstream. Intermediaries in the middle had every reason to manipulate bid request attributes (IP addresses, cookies, MAIDs, device IDs) and resend the same opportunity in different costumes to inflate bid density. None of that produced new inventory. It just produced more requests for the same inventory, with worse signal.

For the DSP, that meant the cost of evaluating the bid stream stopped being a question of compute. It became a question of decisioning time. A bidder that has to evaluate the same impression six different ways across six different SSPs spends most of its budget filtering before it can even ask the real question, which is whether this impression is worth bidding on at all, for which campaign, at what investment level. The faster the bid stream grows, the more of that decisioning budget gets burned on rejection rather than on selection.

nToggle solved for that by sitting between the exchange and the bidder, compressing queries per second by shaping traffic before it hit the decisioning engine. Rubicon Project acquired them in 2017 for $38.5 million, integrated the technology into the SSP stack, and the founders took an appropriate exit. But the gap they were filling never went away. It got worse. The bid stream grew, the duplication grew, the bad actors arbitraging the supply chain grew, and the industry never produced another shared, infrastructure-layer answer for almost a decade.

That gap is what DTE walks into. It is not a revolutionary idea. It is the right idea, finally being delivered in the right shape: as a protocol the buy side can publish to, rather than a private business one DSP has to acquire to use.

What Actually Belongs Upstream

The architectural principle behind both nToggle and DTE is the same: the filter belongs in front of the bidder, not inside it.

When you push filtering logic into the bidder itself, you have already paid for the bid request. You have already burned the compute. You have already added latency to the auction. The decision to discard that impression comes after the cost has been incurred. That is not optimization. That is triage.

When the filter sits at ingress, between the SSP’s signal and the DSP’s bidder, every decision compounds. Bad traffic gets suppressed before it consumes infrastructure. Good traffic gets prioritized because the SSP has been told what shape of traffic the bidder is interested in, without being told the specific audiences, MAIDs, deal IDs, or campaign objectives the bidder is actually targeting. That distinction matters. As DSPs and SSPs converge and compete, the buy side has every reason to keep its targeting logic out of the supply side’s reach. A protocol that lets a DSP shape traffic without exposing the underlying decisioning logic is the difference between a feedback loop and a leak.
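
The cost difference between the two placements can be put in back-of-the-envelope terms. The sketch below is a toy model under stated assumptions: the function name, the request volume, the unwanted share, and the per-request cost are all illustrative numbers, not measurements, and the model ignores whatever it costs the SSP to run the filter.

```python
def bidder_cost(requests: int, unwanted_share: float,
                cost_per_request: float, filter_at_ingress: bool) -> float:
    """Toy model of what the bidder pays to receive and evaluate traffic.

    In-bidder filtering pays for every request and discards afterwards.
    Ingress filtering pays only for the requests that are forwarded.
    """
    if not 0.0 <= unwanted_share <= 1.0:
        raise ValueError("unwanted_share must be between 0 and 1")
    received = requests * (1 - unwanted_share) if filter_at_ingress else requests
    return received * cost_per_request


# Illustrative inputs only: 1M requests, half unwanted, 0.25 cost units each.
in_bidder = bidder_cost(1_000_000, 0.5, 0.25, filter_at_ingress=False)
at_ingress = bidder_cost(1_000_000, 0.5, 0.25, filter_at_ingress=True)

print(in_bidder)   # 250000.0
print(at_ingress)  # 125000.0
```

In this model the saving scales directly with the share of traffic the bidder would have rejected anyway, which is why the placement of the filter matters more as duplication grows.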

Different supply has different shape. CTV is not mobile in-app. Mobile in-app is not audio. Audio is not web display. Geography matters. Ad unit type matters. In-game inventory does not behave like news inventory.

A filter at the protocol layer can express rules for each, run across every SSP that implements the spec, and stay coherent under a single buy-side strategy. A filter inside one SSP cannot.

Filter it at the source, or deal with it later. Those are the options.

The Curation Detour

In recent years, the industry has been applying the wrong mechanism to the bid stream’s quality problem.

Every company claiming to care about “quality inventory” has shipped roughly the same answer: curation. Package inventory into a deal ID, wrap it with some data, label it premium, sell it downstream. The deal ID becomes a proxy for quality. The label becomes a proxy for decisioning.

Think of it like cable bundles. You want a few channels. You get fifty. The package is marketed around the good ones, but the pricing reflects the whole bundle. The weaker inventory rides along with the stronger.

That is curation. The label is “premium.” The economics are bundling.

I have been formalizing my own perspective on curation for a while: its pros and cons, the stakeholders, their motivations, where it actually adds value and where it adds margin while pretending to add value. The short version: SSP curation is a packaging layer, not a decisioning layer. It applies optimization logic in isolation, per SSP. Data gets bundled into CPMs at unclear cost. Frequency and sequencing get fragmented across curated deals that cannot talk to each other. If it’s in the deal, it’s assumed to be high quality. It’s not actually measured. Buyers end up paying a premium for the label without receiving unified optimization across tactics.

The deeper problem is that curation is wasteful by design. It bundles low-quality inventory with higher-quality opportunities the same way a structured product bundles weak credits with strong ones. It limits the capabilities that brands and agencies entrust buy-side platforms to apply on their behalf. It introduces blended fees that hide arbitrage from the parties relying on the tool. The DSP has fiduciary responsibility to the advertiser. Every layer that abstracts decisioning away from the DSP and into a curated package is a layer that weakens that responsibility.

DTE does not work this way. It does not live inside a deal ID. It does not embed data upstream at hidden cost. It is a rules engine for what the bidder actually wants to see, enforced at the request level, in real time, across every SSP. Quality enforcement happens at ingress. Decisioning stays with the buyer.

Amazon Open-Sourced It

At Marketecture Live earlier this year, I contributed to the conversation around AWS’s RTB Fabric announcement. The thesis behind RTB Fabric is straightforward: move supply validation and transparency controls to the ingress layer, shape traffic before it hits the bidder, and run it on cloud infrastructure that lets specific components scale independently of entire systems.

Early adopters have seen data transfer costs drop by more than eighty percent, integration timelines compress from months to days, and bid rates increase as the inventory more closely aligns with what the bidder is actually looking for. Less listening. More winning. Latency tightens. The ingress layer does what the ingress layer is supposed to do.

DTE is a natural extension of that thesis. A DSP-side rules engine, a sell-side evaluator library, and a filter that sits between them. Different implementation, same architectural instinct: enforce quality before the bidder sees the request.

I have confirmed that DTE will be available as a module within RTB Fabric. That is not the surprising part. The surprising part is that AWS did not stop there. They could have made DTE Fabric-only. They could have made it a competitive moat for AWS-hosted exchanges and a reason for the SSPs not yet on Fabric to migrate. Instead, they open-sourced the protocol and donated it to the IAB Tech Lab, on top of building it into Fabric.

That is a more interesting move than it gets credit for. The usual argument for open-sourcing is reach: give it to a neutral body so participants who would not otherwise touch a proprietary stack can adopt it. But most of the major SSPs are already running on AWS. Many have already adopted RTB Fabric. The exchanges that are not on Fabric are exactly the accounts AWS’s adtech division is structurally incentivized to pursue, because that is the entire commercial logic of having an adtech division inside a cloud provider in the first place. Keeping DTE proprietary would have helped that pursuit.

Open-sourcing it does the opposite. It gives a competitive asset to the entire industry, including the exchanges AWS would otherwise be selling against. The most plausible read is that Amazon decided protocol adoption matters more than infrastructure lock-in. Filtering only works when the entire ecosystem implements the same spec. A DTE that lives only inside Fabric is a DTE that filters traffic for a fraction of the bid stream. A DTE that lives inside the IAB Tech Lab is a DTE that can shape traffic across all of it.

The bet AWS appears to be making is that the value of being the cloud where the standard runs natively is greater than the value of being the cloud where the standard is exclusive.

Governance Finally Has a Venue

The donation lands at a moment when the IAB Tech Lab has, for the first time in a long time, a credible mechanism for actually coordinating cross-industry adoption.

In December, the Tech Lab stood up the Programmatic Governance Council, co-chaired by OMD’s Ben Hovaness alongside three sell-side executives covering web, in-app, and CTV. Founding members include Omnicom, WPP, Dentsu, Disney, Amazon Ads, The Trade Desk, Magnite, PubMatic, Hearst, News Corp, Yahoo, Raptive, and Mediavine. As reported by AdExchanger, the PGC has met four times since December and now meets twice a month. The remit is straightforward: give industry stakeholders a structured venue to work through technical and commercial disagreements, rather than letting them play out in LinkedIn threads and conference panels.

The first set of recommendations is expected within 90 to 120 days. Bid duplication and QPS bloat are at the top of the list, by Hovaness’s own framing. The ANA estimates roughly $20 billion in waste on the open web annually, with only 36 cents of every programmatic dollar reaching the publisher once fees, hops, and infrastructure costs are accounted for. Bid duplication alone accounts for about five cents of every programmatic dollar: one-sixth of total ad tech costs, attached to a behavior that produces no benefit for buyers or publishers.

DTE landing at IAB Tech Lab the same quarter the PGC is actively prioritizing those exact problems is not a coincidence of timing, even if the two efforts were not coordinated. It places the protocol inside the venue that has the membership and the mandate to drive multilateral adoption. Past transparency efforts have often fallen short because individual companies tried to dictate change unilaterally. The PGC is built specifically to avoid that failure mode. DTE benefits from arriving on its desk early.

Index Cloud and the Other Direction

As I was drafting this piece, Index Exchange announced Index Cloud, a different swing at a related problem. Partners can deploy their own containers, including full DSP bidders, directly inside Index’s infrastructure. Impression-level signals hit the container, the partner’s logic runs, a decision is returned, and the whole execution window is under five milliseconds. The stated goal is the same as DTE’s: bring intelligence closer to the impression, reduce wasted compute, eliminate the public-cloud round trip.

Index was explicit about the architecture. Index Cloud is not built on AWS, GCP, or Azure. It is purpose-built infrastructure, and the announcement specifically argues that “running adjacent to the exchange is not the same as running inside it.” That is a real position. DTE says: publish your preferences, let the SSP filter at ingress, keep the decisioning layer with the buyer. Index Cloud says: move the decisioning layer inside the exchange, and we will host it for you.

Credit where it is due. Index has taken one of the only – possibly the only – genuinely unbiased stances among the major exchanges. They have stayed pure supply-side and built toward that. Compare that to PubMatic with Activate or Magnite with Clearline, where the SSP is actively competing with its own buyers. Index Cloud, viewed in isolation, is a logical extension of that supply-side commitment.

But the model has a structural ceiling. Index is one exchange. A very good one, with real scale and quality, but one of many. A DSP that runs its bidding logic inside Index’s walls is not optimizing across the market. It is specializing inside one source of supply. Every signal it gains on Index inventory is a signal it might not have on Magnite, PubMatic, OpenX, FreeWheel, or any direct publisher integration. Every auction it wins inside Index is an auction whose counterfactual – the same user reachable on another path – it cannot see.

This is the same problem curation creates, one layer up. Curation fragments optimization across packaged deals. Container-hosted bidders fragment optimization across individual exchanges. The concept of building bidding logic across multiple SSPs and keeping it coherent and in sync, when each instance lives inside a different exchange’s infrastructure, runs against everything DSPs are built to do. You cannot do holistic cross-supply decisioning from inside one supply source. The moment your richest signal set lives inside one SSP’s environment, that SSP becomes the gravitational center of your optimization logic. Everywhere else gets a thinner version of your model. In the case of Bedrock, the flagship Index Cloud partner, “thinner” is doing some heavy lifting. It is entirely dependent on Index’s inventory. The rest of the market is invisible.

Frequency and sequencing are the obvious casualties of both curation and containerized DSPs.

DTE does not have this problem because it is a horizontal protocol rather than a vertical environment. A DSP can run different filtering logic for different channels, different geographies, different ad formats – CTV gets one rule set, mobile in-app gets another, audio gets a third – and have all of them propagate horizontally across every SSP that implements the spec. Optimization stays centralized with the buyer. Supply paths remain comparable. The decisioning layer does not get absorbed into the supply layer.
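
One way to picture that horizontal propagation is a single channel-keyed rule set that every SSP evaluates identically. This is a sketch under assumptions, not the DTE specification: `RULES_BY_CHANNEL`, `evaluate`, `shape`, the SSP names, and the choice to filter channels with no published rules are all hypothetical.

```python
# Hypothetical buy-side rule sets, one per channel, published once.
RULES_BY_CHANNEL = {
    "ctv": {"formats": {"video"}, "regions": {"US"}},
    "mobile_in_app": {"formats": {"banner", "video"}, "regions": {"US", "CA"}},
    "audio": {"formats": {"audio"}, "regions": {"US", "GB"}},
}


def evaluate(rules_by_channel: dict, impression: dict) -> bool:
    """Apply the rule set for the impression's channel."""
    rules = rules_by_channel.get(impression["channel"])
    if rules is None:
        # Assumption: channels with no published rules are filtered.
        return False
    return (impression["format"] in rules["formats"]
            and impression["region"] in rules["regions"])


def shape(traffic_by_ssp: dict) -> dict:
    """Every SSP runs the same rules, so decisions stay comparable."""
    return {
        ssp: [imp["id"] for imp in imps if evaluate(RULES_BY_CHANNEL, imp)]
        for ssp, imps in traffic_by_ssp.items()
    }


traffic = {
    "ssp_a": [
        {"id": "a1", "channel": "ctv", "format": "video", "region": "US"},
        {"id": "a2", "channel": "audio", "format": "audio", "region": "DE"},
    ],
    "ssp_b": [
        {"id": "b1", "channel": "ctv", "format": "video", "region": "US"},
        {"id": "b2", "channel": "mobile_in_app", "format": "banner", "region": "CA"},
        {"id": "b3", "channel": "web_display", "format": "banner", "region": "US"},
    ],
}

shaped = shape(traffic)
print(shaped)  # {'ssp_a': ['a1'], 'ssp_b': ['b1', 'b2']}
```

The same CTV impression shape is forwarded by both SSPs because the rules live with the buyer, not inside either exchange.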

That is the architecture that preserves buy-side independence. Curation moves optimization into the SSP via the deal ID. Index Cloud moves optimization into the SSP via the container. Both are attempts, intentional or not, to make the SSP a more valuable decisioning surface at the expense of the DSP’s role as the unified layer. DTE runs the other way. It sharpens filtering while keeping decisioning exactly where it belongs: with the DSP.

Adoption or Irrelevance

A few things will tell us whether DTE becomes infrastructure or becomes a footnote.

First, SSP adoption. The evaluator library has to run inside the exchanges, and the exchanges have to decide whether cooperating with a pre-bid filter serves their interests or undermines their competitive edge against other supply sources still relying on higher QPS and the yield that comes with it, whatever the cost to the buyer. The Fabric integration helps. The PGC helps more, because it gives SSPs a multilateral venue to negotiate the terms of cooperation rather than be asked to volunteer it.

Second, DSP rule publication. If the major DSPs publish thin, generic rule sets, DTE will not provide enough signal to meaningfully shape traffic. If they publish rich, campaign-aware rule sets, the system starts producing real efficiency gains within quarters, not years. The open-source governance model makes this more likely, as DSPs that would have hesitated to publish detailed rule sets into a cloud run by a competitor have fewer reasons to hesitate when the protocol is governed by a neutral standards body.

Third, the PGC’s first set of recommendations. The 90–120 day timeline gives the market something concrete to align against. If the PGC produces substantive recommendations on bid duplication and QPS bloat, and the founding members commit to implementation, DTE has a tailwind. If the recommendations stall or get watered down to something every member can sign without changing anything, DTE will still exist, but it will exist as a tool the most diligent buyers use rather than as the standard the industry runs on.

Traffic shaping was right in 2015. nToggle did not need to keep building it as an independent business. They exited, the technology was absorbed into Rubicon, and the gap they left behind grew with the bid stream. The industry needed another answer, and for a long time, it did not get one. In 2026, we have a protocol instead of a product. That is a different shape of solution, with different adoption dynamics, but it is the shape the problem actually requires.

Quality enforced at ingress. Decisioning owned by the buy side. Filtering logic that propagates horizontally across every SSP, without handing the rules of the auction to any party with a structural interest in shaping them.